Dexterous manipulation is bottlenecked by the cost of collecting large-scale, physically feasible demonstrations. Egocentric human videos capture manipulation behaviors in abundance, but transforming them into robot-executable demonstrations is challenging because of differences in embodiment appearance and because of robot feasibility constraints. We propose EgoEngine, a scalable framework for human-video-conditioned robot data generation that simultaneously addresses the human-to-robot visual gap and action gap. Given an egocentric RGB manipulation video, EgoEngine generates paired robot demonstrations: (i) a high-fidelity robot observation video aligned with the generated actions and the scene context, and (ii) an executable action sequence computed via trajectory optimization that tracks the task intent while enforcing kinematic, dynamic, and contact constraints. Our experiments show that EgoEngine enables robust large-scale dataset construction from human videos and achieves zero-shot downstream policy learning without any real robot demonstrations.
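The action branch is described here only at a high level. The sketch below illustrates the general idea of constrained trajectory tracking under several stated assumptions: the human motion has already been retargeted into a joint-space reference `q_ref`; joint limits stand in for the kinematic constraints and are enforced exactly by projection; a per-step velocity limit stands in for the dynamic constraints as a soft quadratic penalty; and contact constraints are omitted entirely. The function name `optimize_trajectory`, the cost weights, and the choice of projected gradient descent are hypothetical and are not EgoEngine's actual solver.

```python
import numpy as np


def optimize_trajectory(q_ref, q_min, q_max, v_max,
                        smooth=1.0, penalty=100.0, lr=2e-3, iters=5000):
    """Hypothetical tracking optimizer over a (T, d) joint trajectory.

    Minimizes  sum_t ||q_t - q_ref_t||^2
             + smooth  * sum_t ||q_{t+1} - q_t||^2
             + penalty * sum_t ||max(|q_{t+1} - q_t| - v_max, 0)||^2
    with joint limits [q_min, q_max] enforced exactly by clipping.
    """
    q_ref = np.asarray(q_ref, dtype=float)
    q = np.clip(q_ref, q_min, q_max)                  # feasible initial guess
    for _ in range(iters):
        dq = np.diff(q, axis=0)                       # per-step joint change, (T-1, d)
        # Signed amount by which each step exceeds the velocity limit.
        excess = np.sign(dq) * np.maximum(np.abs(dq) - v_max, 0.0)
        g_pair = 2.0 * smooth * dq + 2.0 * penalty * excess  # d(cost)/d(dq)
        grad = 2.0 * (q - q_ref)                      # tracking term
        grad[1:] += g_pair                            # dq_t = q_{t+1} - q_t: +q_{t+1}
        grad[:-1] -= g_pair                           # ... and -q_t
        q = np.clip(q - lr * grad, q_min, q_max)      # projected gradient step
    return q
```

For example, a (20, 1) reference that jumps from 0 to 1 at step 10, with `q_max=0.9` and `v_max=0.1`, becomes a ramp that never leaves the joint limits and spreads the jump over several steps instead of one. The step size `lr` is kept below 2 divided by the cost's Lipschitz constant so that the projected iteration converges; a real system would more likely use a dedicated constrained solver.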

Visual Branch

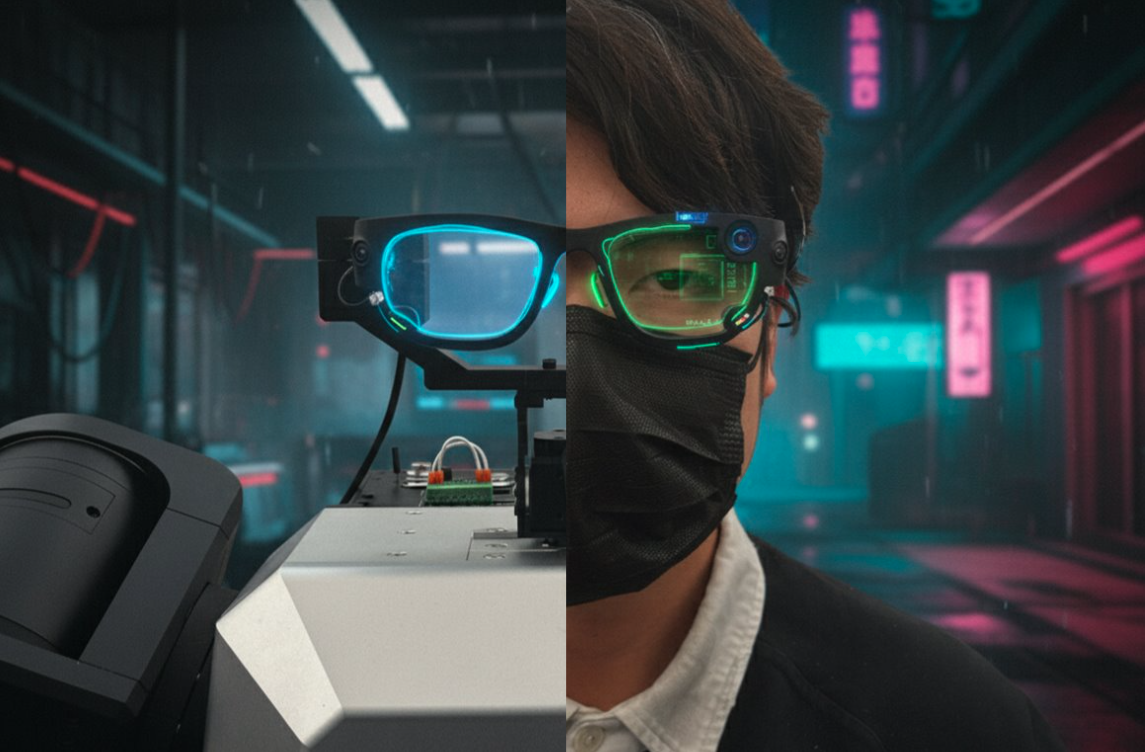

We first show the visual fidelity of generated observations, followed by dataset-scale visualizations from TACO and Aria.

Visual Comparison

Visual Generation

Real-World Visualization

Action Branch

We next present executable action outputs produced by EgoEngine on both TACO and Aria tasks.

Refined Action

Real World

Finally, we show real-world task results, including paired original human observations and generated robot observations.